Bridge the gap between features shipped and dollars earned

The Digital Product organization often finds itself in a precarious position in big companies. They are expected to innovate like a startup but are measured like a cost center. They lack the resources to properly validate the business impact of the features and capabilities they deliver. So they end up prioritising output over impact, which is the hallmark of a feature factory. At best, they settle for measures of proximate impact, partially validated by A/B tests. However, these results are somewhat removed from downstream business impact. Establishing downstream impact in these places is often more about narrative building and manual data stitching. But then, other functions like marketing may have their own narratives. The "value claims" in these narratives might not add up to the observed business impact.

As a product leader, you will be able to claim value in a more credible manner by taking steps to improve impact intelligence.

Impact Intelligence is the constant awareness of the business impact of your initiatives. In your context, those initiatives mainly take the form of digital product development. It requires an ongoing process of collecting, analyzing, and using data for impact insight, not just customer insight.

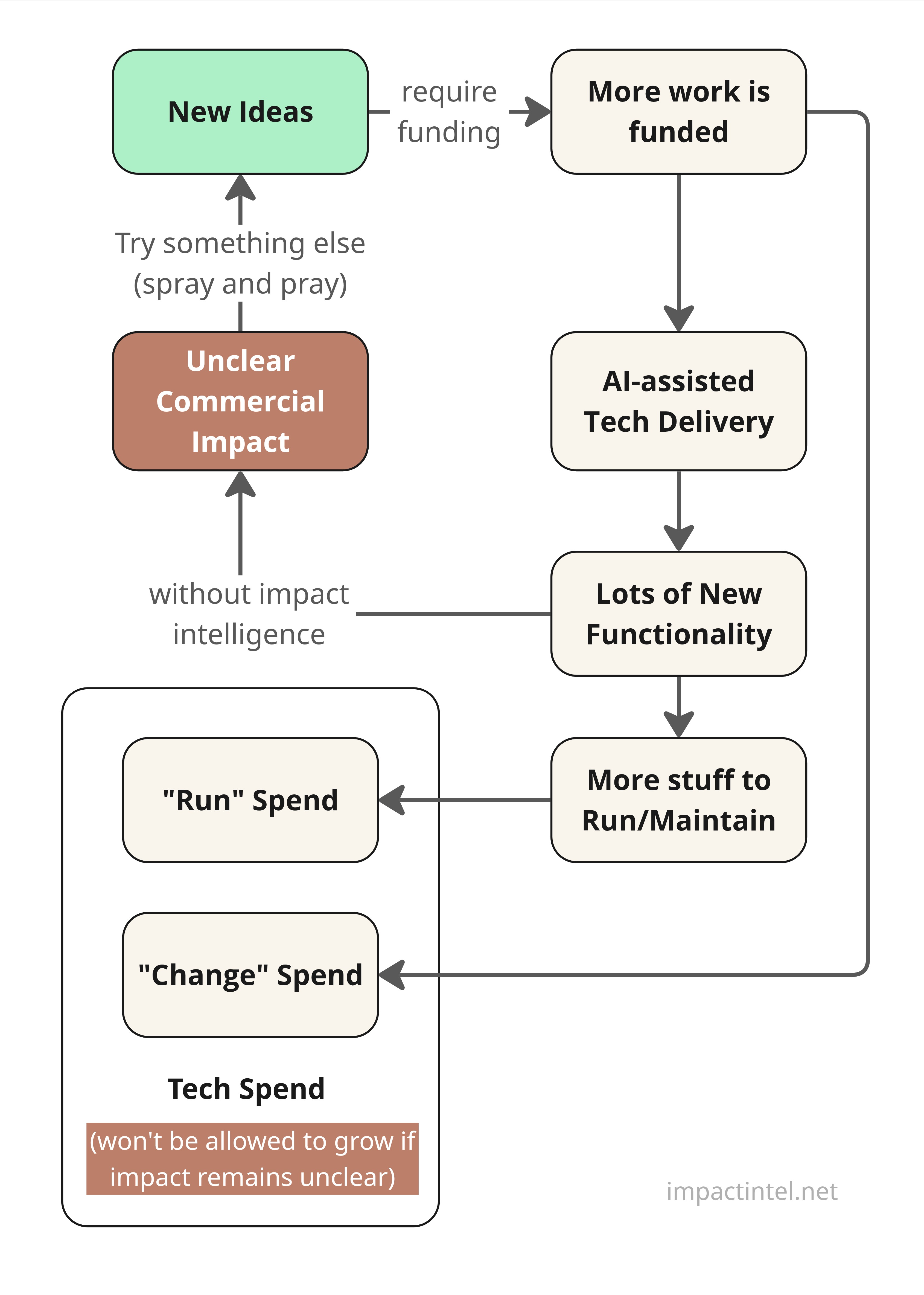

The increasing use of AI for product development makes impact intelligence even more relevant. As teams churn out functionality at an ever faster pace, the problem highlighted in Figure 1 becomes existential. As the volume of functionality to be maintained swells, a greater proportion of tech spend will need to be diverted away from new innovation. The overall pie (tech budget) won't grow unless your stakeholders agree that the expenditure pays off in terms of positive business impact.

Figure 1: Consequences of Unclear Business Impact

Visualize Paths to Business Impact

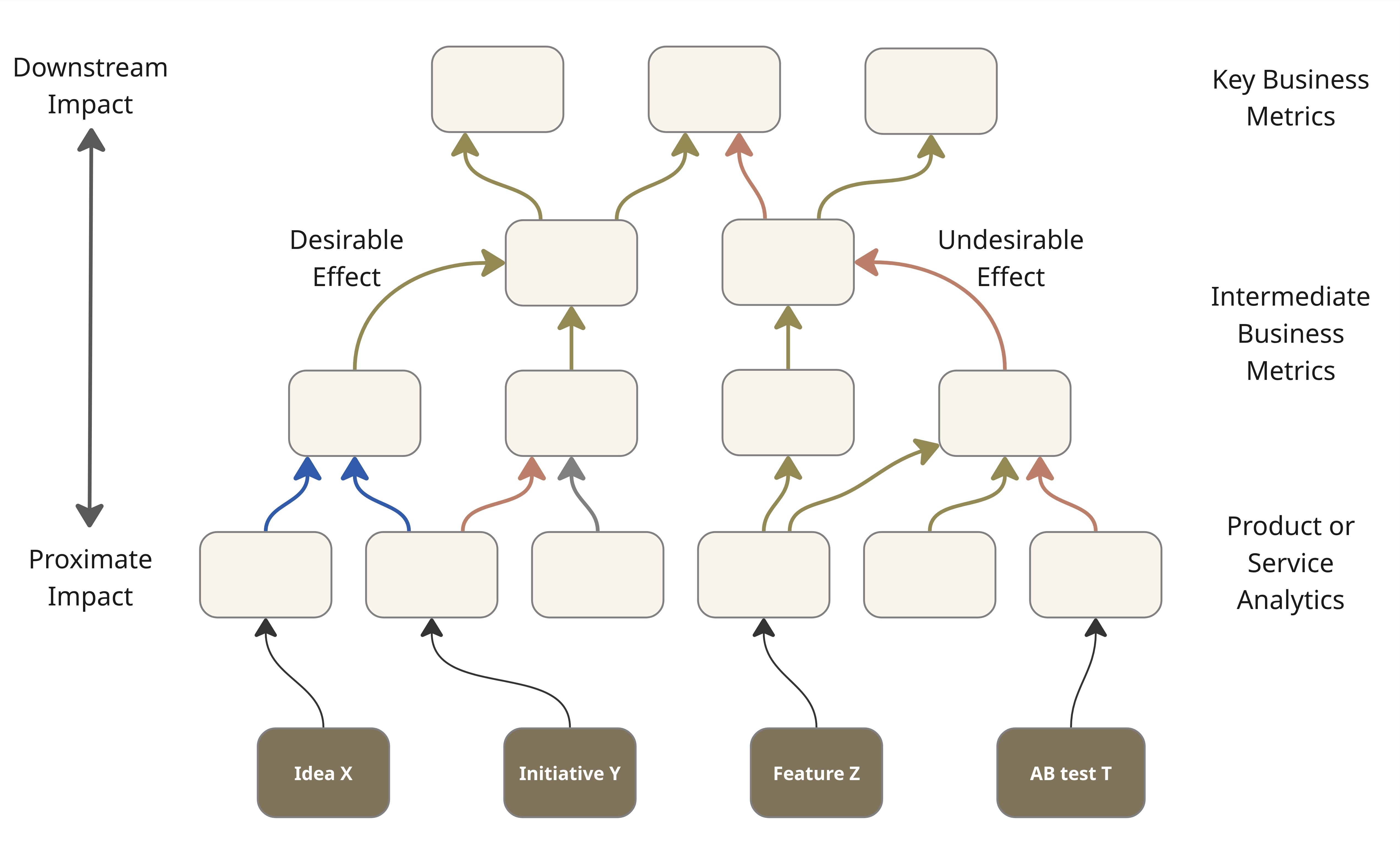

Your stakeholders might not always have the same understanding of how a feature or capability helps move the needle on a downstream metric. Agreement is easier for immediate metrics (product analytics). A visual representation of the contribution paths helps grow shared understanding. Figure 2 illustrates this with the use of a visual that I call an impact network.

It brings out the inter-linkages between factors that contribute to business impact, directly or indirectly. It is a bit like a KPI tree, but it can sometimes be more of a network than a tree. In addition, it follows some conventions to make it more useful. Green, red, blue, and black arrows depict desirable effects, undesirable effects, rollup relationships, and the expected impact of functionality, respectively. Solid and dashed arrows depict direct and inverse relationships. Except for the rollups (in blue), the links don't always represent deterministic relationships. The impact network is a bit like a probabilistic causal model. A few more conventions are laid out in the book.

The bottom row of features, initiatives etc. is a temporary overlay on the impact network which, as noted earlier, is basically a KPI tree where every node is a metric or something that can be quantified. I say temporary because the book of work keeps changing while the KPI tree above remains relatively stable.

Figure 2: An Impact Network with the current Book of Work overlaid.
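The arrow conventions described for the impact network map naturally onto a small data structure. The following is a minimal sketch, not a tool from the book: the class names, the `downstream_of` helper, and the two example links are assumptions made for illustration. The node names borrow from the chatbot example discussed later, but the links are not a transcription of any figure.

```python
from dataclasses import dataclass, field
from enum import Enum


class Effect(Enum):
    """Arrow colors, following the conventions described in the text."""
    DESIRABLE = "green"
    UNDESIRABLE = "red"
    ROLLUP = "blue"            # the only deterministic relationship
    EXPECTED_IMPACT = "black"  # overlay: feature or initiative -> metric


@dataclass(frozen=True)
class Link:
    source: str
    target: str
    effect: Effect
    inverse: bool = False  # dashed arrow: when source rises, target falls


@dataclass
class ImpactNetwork:
    links: list[Link] = field(default_factory=list)

    def add(self, source, target, effect, inverse=False):
        self.links.append(Link(source, target, effect, inverse))

    def downstream_of(self, node):
        """Return every node reachable from `node`, nearest first."""
        seen, queue = [], [node]
        while queue:
            current = queue.pop(0)
            for link in self.links:
                if link.source == current and link.target not in seen:
                    seen.append(link.target)
                    queue.append(link.target)
        return seen


# Hypothetical links, for illustration only.
network = ImpactNetwork()
network.add("AI chatbot", "Virtual assistant capture", Effect.EXPECTED_IMPACT)
network.add("Virtual assistant capture", "Call volume", Effect.DESIRABLE, inverse=True)

print(network.downstream_of("AI chatbot"))
# ['Virtual assistant capture', 'Call volume']
```

Keeping the book of work as ordinary `EXPECTED_IMPACT` links makes it easy to drop and replace the overlay while the metric-to-metric links stay put.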

Typically, the introduction of new features or capabilities moves the needle on product or service metrics only. Their impact on higher-level metrics is indirect and less certain. Direct or first-order impact, called proximate impact, is easier to notice and claim credit for. Indirect (higher order), or downstream impact, occurs further down the line and it may be influenced by multiple factors. The examples to follow illustrate this.

A/B tests are commonly deployed to test proximate impact. Under the right conditions, they can sometimes test for downstream impact too. But as you will notice from the examples below, they are not always feasible.

For those familiar with input and output metrics popularised by Amazon, they are somewhat analogous to proximate and downstream impact as defined here. Every link between two nodes in an impact network is potentially a link between an input and an output metric in some context. Note that Amazon insiders use the term Downstream Impact in a slightly different sense. Please refer to the book for more detail.

The rest of this article features smaller, context-specific subsets of the overall impact network for a business.

Example #1: A Customer Support Chatbot

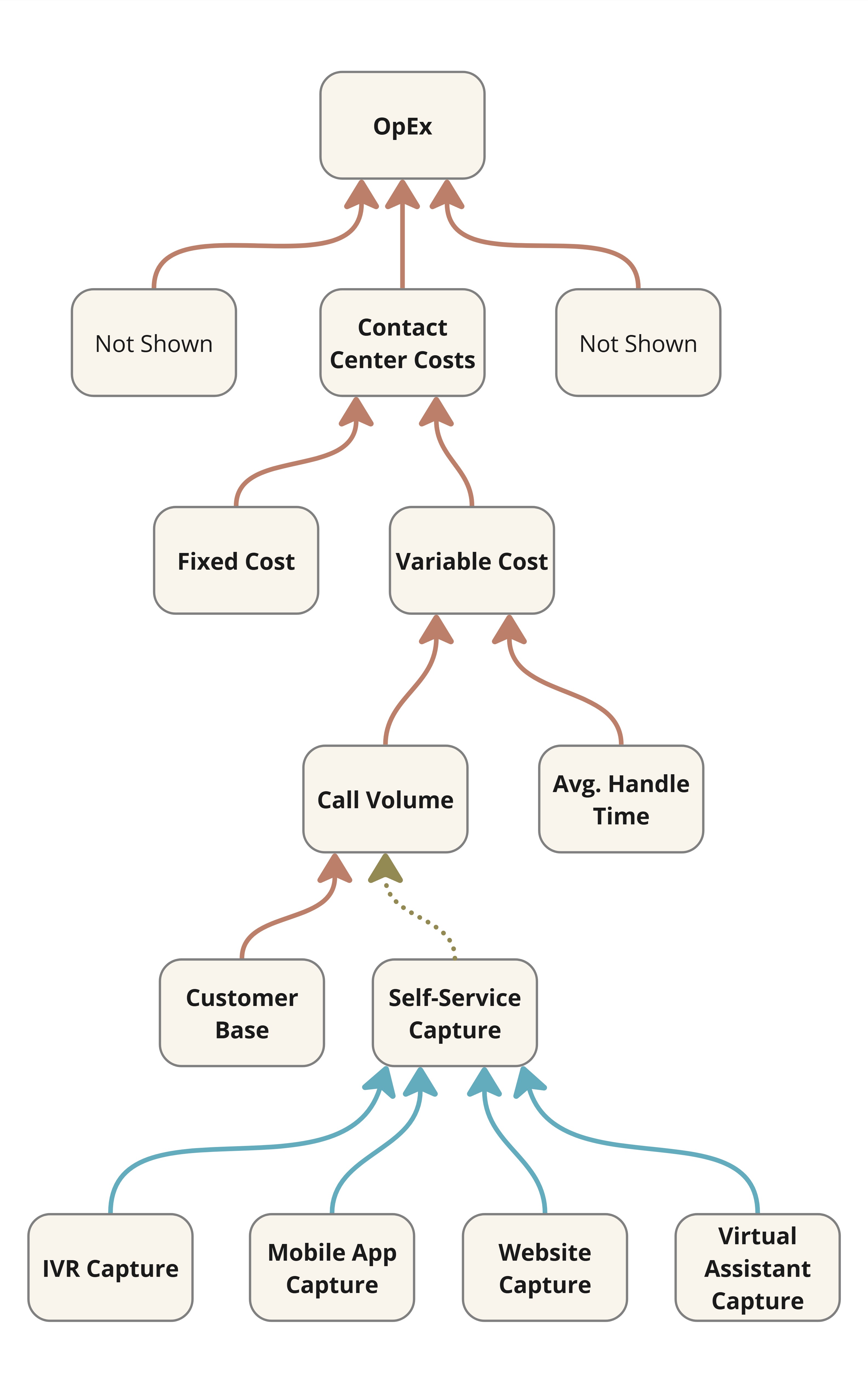

What’s the contribution of an AI customer support chatbot to limiting call volume (while maintaining customer satisfaction) in your contact center?

Figure 3: Downstream Impact of an AI Chatbot

It is not enough anymore to assume success based on mere solution delivery, or even on the number of satisfactory chatbot sessions, which Figure 3 calls virtual assistant capture. That’s proximate impact. It’s what the Lean Startup mantra of build-measure-learn typically aims for. However, downstream impact in the form of call savings is what really matters in this case. In general, proximate impact might not be a reliable leading indicator of downstream impact.

A chatbot might be a small initiative in the larger scheme, but small initiatives are a good place to exercise your impact intelligence muscle.

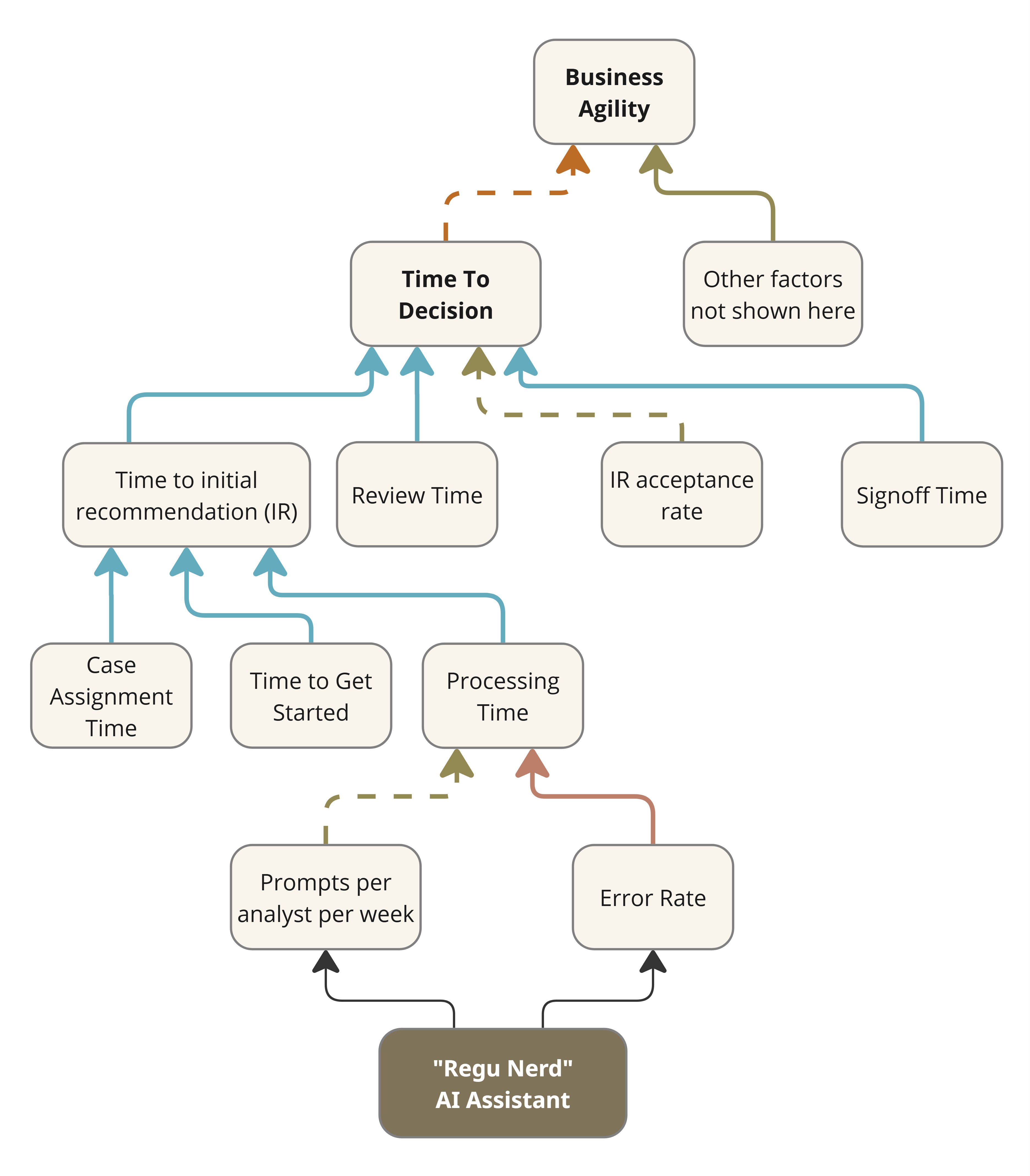

Example #2: Regulatory Compliance AI assistant

Consider a common workflow in regulatory compliance. A compliance analyst is assigned a case. They study the case, its relevant regulations and any recent changes to them. They then apply their expertise and arrive at a recommendation. A final decision is made after subjecting the recommendation to a number of reviews and approvals depending on the importance or severity of the case. The Time to Decision might be of the order of hours, days or even weeks depending on the case and its industry sector. Slow decisions could adversely affect the business. If it turns out that the analysts are the bottleneck, then perhaps it might help to develop an AI assistant (“Regu Nerd”) to interpret and apply the ever-changing regulations. Figure 4 shows the impact network for the initiative.

Figure 4: Impact Network for an AI Interpreter of Regulations

Its proximate impact may be reported in terms of the uptake of the assistant (e.g., prompts per analyst per week), but it is more meaningful to assess the time saved by analysts while processing a case. Any real business impact would arise from an improvement in Time to Decision. That’s downstream impact, and it would only come about if the assistant were effective and if the Time to initial recommendation were indeed the bottleneck in the first place.

Example #3: Email Marketing SaaS

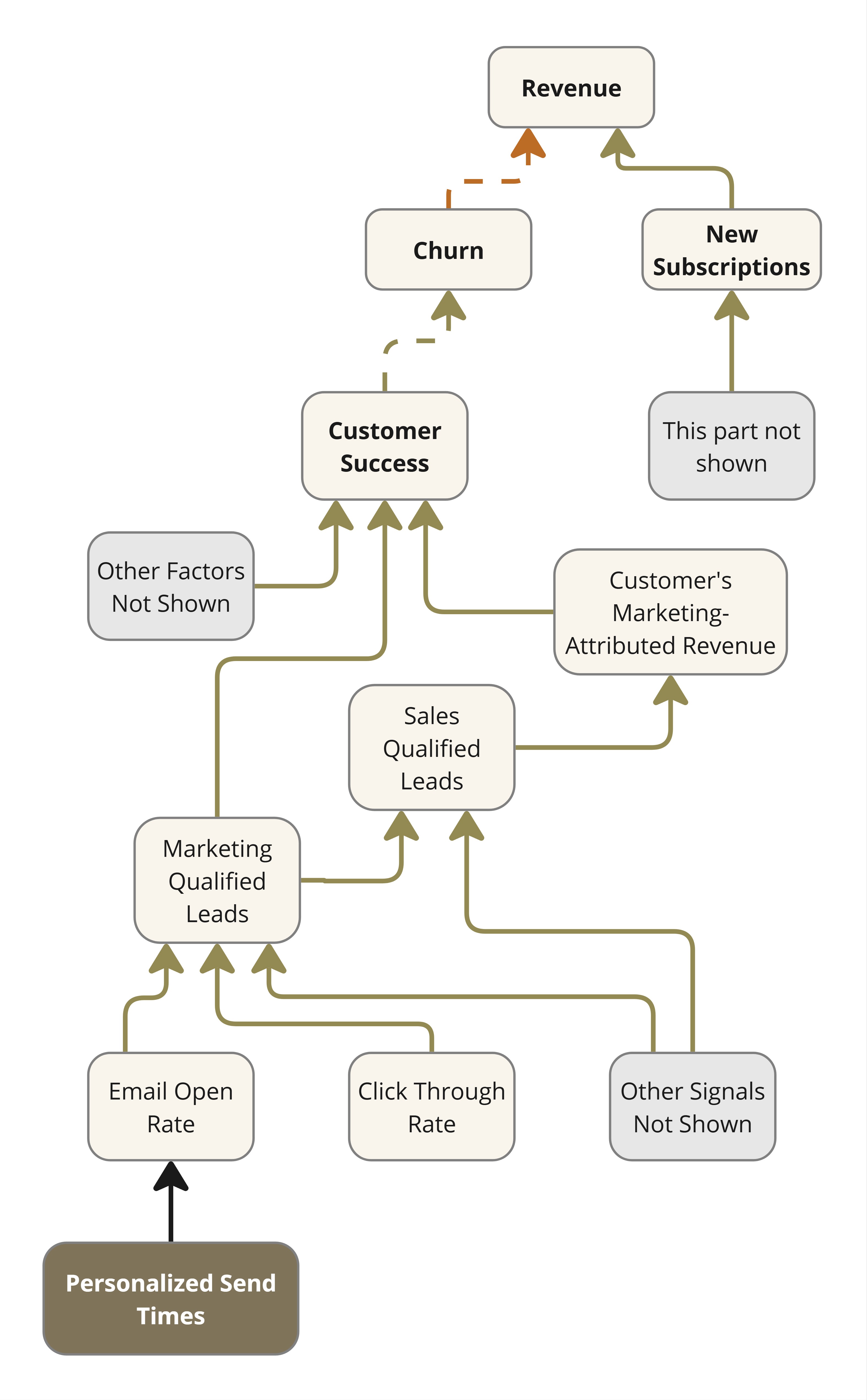

Consider a SaaS business that offers an email marketing solution. Their revenue depends on new subscriptions and renewals. Renewal depends on how useful the solution is to their customers, among other factors like price competitiveness. Figure 5 shows the relevant section of their impact network.

Figure 5: Impact Network for an Email Marketing SaaS

The clearest sign of customer success is how much additional revenue a customer could make through the leads generated via the use of this solution. Therefore, the product team keeps adding functionality to improve engagement with emails. For instance, they might decide to personalize the timing of email dispatch as per the recipient’s historical behavior. The implementation uses behavioral heuristics from open/click logs to identify peak engagement windows per contact. This information is fed to their campaign scheduler. What do you think is the measure of success of this feature? If you limit it to Email Open Rate or Click Through Rate, you could verify it with an A/B test. But that would be proximate impact only.
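To make the send-time heuristic concrete, here is a minimal sketch of one way to derive a peak engagement hour per contact from open/click logs. It is an assumption, not the implementation described above: the `(contact_id, timestamp)` log shape, the function name, and the choice of "most frequent hour of day" as the heuristic are all hypothetical.

```python
from collections import Counter, defaultdict
from datetime import datetime


def peak_send_hours(events):
    """Map each contact to the hour of day (0-23) in which they most often
    opened or clicked an email.

    `events` is an iterable of (contact_id, timestamp) pairs.
    """
    hours_by_contact = defaultdict(Counter)
    for contact_id, timestamp in events:
        hours_by_contact[contact_id][timestamp.hour] += 1
    return {
        contact: counts.most_common(1)[0][0]
        for contact, counts in hours_by_contact.items()
    }


# Made-up log entries, for illustration only.
log = [
    ("ana", datetime(2024, 3, 4, 8, 15)),
    ("ana", datetime(2024, 3, 5, 8, 40)),
    ("ana", datetime(2024, 3, 6, 19, 5)),
    ("raj", datetime(2024, 3, 4, 21, 30)),
]

print(peak_send_hours(log))
# {'ana': 8, 'raj': 21}
```

Shipping something like this tells you nothing about leads or revenue by itself. It only feeds the scheduler; the downstream question remains open until it is measured.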

Actions to Improve Impact Intelligence

Action #1: Improve Demand Management

Product may own idea triaging and prioritization, but the ideas often come from the leaders of marketing, sales, operations, country heads, and so on. These ideas are not always well documented. Whether it takes the form of a business case or a justification slide deck, a well documented idea ought to answer all the questions in the Idea Questionnaire shared here.

Idea questionnaire

An idea is adequately documented if it answers the following questions unambiguously.

1. What problem is this idea trying to address? Or what opportunity does this idea aim to exploit?
2. What’s the evidence that the problem/opportunity exists?
3. What’s the proposed solution/approach? Who’s championing it?
   a. What alternative solutions were considered and set aside? Why?
   b. What metric (proximate and downstream) is this solution likely to improve?
      i. By how much and in what time frame?
      ii. How confident are you about this? On what basis?
      iii. Under what assumptions and dependencies?
   c. How is impact verifiable? Do we have the measurement capability in place?
4. What’s the risk of not doing this? What’s the likelihood of the risk?

A commonly understood impact network helps answer some of the above questions. Answering the above makes for SMART (Specific, Measurable, Achievable, Relevant, Time-bound) ideas. Else they might be VAPID (Vague, Amorphous, Pie-in-the-sky, Irrelevant, Delayed). It is impossible to validate the business impact of VAPID ideas post tech delivery. When the idea originators are unable to answer a question, it is essential to capture that such is the case. At least we'll know how often Product is forced to prioritise ill-documented VIP requests.

Note that adequately documented does not necessarily mean well justified. The merit or strength of an idea is still determined as part of idea triage.

This approach will have its detractors, especially among those at the receiving end of the idea questionnaire. They might protest that it is a form of gatekeeping. You must take the lead in explaining why it is necessary. A later section provides some guidance on how you could go about this. For now, I’ll only list the common objections.

- This will slow us down. We can’t afford that.

- It’s not agile to consider all this upfront.

- Innovation isn’t predictable.

- Our PMO/VMO already takes care of this.

- This isn't collaborative.

- We don’t have the data.

The last one is more than an objection if it is a fact. It can be a showstopper for impact intelligence. It warrants immediate attention.

We Don’t Have The Data

Data is essential to answer the questions in the Idea Questionnaire. Demand generators might protest that they don’t have the data to answer some of the questions. What’s a product leader to do now? At the very least, you could start reporting on the current situation. I once helped a client come up with a rating for the answers. Qualifying requests were rated on a scale from inadequate to excellent based on the answers to the questionnaire. The idea is to share monthly reports of how well-informed the requests are. They make it visible to COOs and CFOs how much product development bandwidth is committed to working on mere hunches. Creating awareness with reports is the first step.
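A rating like this can start out very simply. The sketch below counts how many questionnaire items a request answers and maps the count to a label. Only the two ends of the scale (inadequate and excellent) come from the text. The intermediate labels, the thresholds, and the field names are assumptions for illustration, and a real rating would also judge the quality of each answer rather than just its presence.

```python
from collections import Counter

# One key per question in the Idea Questionnaire (key names are hypothetical).
QUESTIONS = [
    "problem_or_opportunity",
    "evidence",
    "proposed_solution",
    "alternatives_considered",
    "metrics_to_improve",
    "how_much_and_when",
    "confidence_and_basis",
    "assumptions_and_dependencies",
    "how_verifiable",
    "risk_of_not_doing",
]


def rate_request(answers):
    """Rate one request by the number of questions it actually answers."""
    answered = sum(1 for q in QUESTIONS if (answers.get(q) or "").strip())
    if answered >= 9:
        return "excellent"
    if answered >= 7:
        return "good"        # assumed intermediate label
    if answered >= 4:
        return "fair"        # assumed intermediate label
    return "inadequate"


def monthly_report(requests):
    """Count requests per rating, ready to share with the COO/CFO."""
    return Counter(rate_request(r) for r in requests)


# Made-up requests, for illustration only.
well_documented = {q: "answered" for q in QUESTIONS}
vip_hunch = {"problem_or_opportunity": "Competitor has it", "proposed_solution": "Build it"}

report = monthly_report([well_documented, vip_hunch])
print(dict(report))
# {'excellent': 1, 'inadequate': 1}
```

Unanswered questions are recorded as blanks rather than dropped, which keeps visible how often ill-documented requests get prioritised anyway.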

Awareness of gaps brings up questions. Why do we lack data? Inadequate measurement infrastructure is a common reason. Frame it as measurement debt so that it gets at least as much attention and funding as technical debt.

An organization takes on measurement debt when it implements initiatives or develops digital capabilities without investing in the measurement infrastructure required to validate the benefits they deliver.

Action #2: Pay Down Measurement Debt

Measurement debt is best addressed through a measurement improvement program. It comprises a team tasked with erasing blind spots in the measurement landscape. But it would require separate funding, which means you might need to convince your COO or CFO. If that’s not feasible, consider doing it yourself.

Take the lead in reducing measurement debt. Measurement capability (telemetry) must be built alongside new functionality, not as an afterthought. Make sure to fill only the gaps in measurement and integration. There is no need to duplicate what is already available through third-party analytics tools that you have in place. Measurement debt reduction might be easier if there's a product operations team in place. Developers might be able to work with them to identify and address gaps more effectively.
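As a rough illustration of building telemetry alongside functionality, the sketch below emits one structured event from the same code path that implements a feature. Everything in it is hypothetical: the event name, the fields, and the in-memory list standing in for whatever event pipeline or analytics tool you already have.

```python
import json
import time


def record_event(sink, name, **fields):
    """Append one structured measurement event to `sink`.

    `sink` is a plain list here; in practice it would be your existing
    event pipeline (an assumption, not a specific product).
    """
    sink.append(json.dumps({"event": name, "ts": time.time(), **fields}))


def close_chat_session(sink, session_id, resolved, handed_off_to_agent):
    """Feature code and its measurement ship together."""
    # ... the feature's own logic would run here ...
    record_event(
        sink,
        "chat_session_closed",
        session_id=session_id,
        resolved=resolved,
        handed_off_to_agent=handed_off_to_agent,
    )


events = []
close_chat_session(events, "s-1", resolved=True, handed_off_to_agent=False)
first = json.loads(events[0])
print(first["event"], first["resolved"], first["handed_off_to_agent"])
# chat_session_closed True False
```

The point of the `handed_off_to_agent` field is that it links a proximate metric (satisfactory sessions) to a downstream one (calls avoided), which is exactly the kind of gap that product analytics tools rarely cover out of the box.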

Measurement Debt reduction is all the more feasible now thanks to AI-assisted coding. The excuse to defer telemetry in order to meet functionality deadlines is no longer tenable.


Action #3: Introduce Impact Validation

When you adopt impact measurement as a practice, it allows you to maintain a report as shown in the table below. It provides a summary of the projection vs. performance of the efforts we discussed earlier.

You now have the opportunity to conduct an impact retrospective. The answer to the question, “By how much and in what time frame” (item 3(b)(i) in the Idea Questionnaire), allows us to pencil in a date for a proximate impact retrospective session. The session is meant to discuss the difference between projection and performance, if any. In case of a deficit, the objective is to learn, not to blame. This informs future projections and feeds back into improved demand management.

| Feature/Initiative | Metric of Proximate Impact | Expected Value or Improvement | Actual Value or Improvement |
|---|---|---|---|
| Customer Support AI Chatbot | Average number of satisfactory chat sessions per hour during peak hours | 2350 | 1654 |
| “Regu Nerd” AI Assistant | Prompts per analyst per week | > 20 | 23.5 |
| | Time to initial recommendation | -30% | -12% |
| Email Marketing: Personalized Send Times | Email Open Rate | 10% | 4% |
| | Click Through Ratio | 10% | 1% |

It's okay if, in the first year of rollout, the actuals are much weaker than what was expected. It might take a while for idea champions to temper their optimism when they state expected benefits. It should have no bearing on individual performance assessments. Impact intelligence is meant to align funding with portfolio (of initiatives) performance.

Impact measurement works the same for downstream impact, but impact validation works differently. This is because unlike proximate impact, downstream impact may be due to multiple factors. The table below illustrates this for the examples discussed earlier. Any observed improvement in the downstream metric cannot be automatically and fully attributed to any single improvement effort. For example, you might notice that call volume has gone up by only 2.4% in the last quarter despite a 4% growth in the customer base. But is it all due to the customer support chatbot? That requires further analysis.

| Feature/Initiative | Metric of Downstream Impact | Expected Improvement | Observed Improvement (Unattributed) | Attributed Improvement |
|---|---|---|---|---|
| AI Chatbot | Call Volume (adjusted for business growth) | -2% | -1.6% | ? |
| “Regu Nerd” AI Assistant | Time to Decision | -30% | -5% | ? |
| Email Marketing: Personalized Send Times | MQL | 7% | 0.85% | ? |
| | Marketing-Attributed Revenue | 5% | Not Available | ? |

Retrospectives for downstream impact are meant to attribute observed improvements to the initiatives at play and to other factors. This is called contribution analysis. They are best scheduled monthly or quarterly, convened by a business leader who has a stake in the downstream metric in question. Make sure that the measurements are in place for the retrospective to take place, should the business leader so choose.

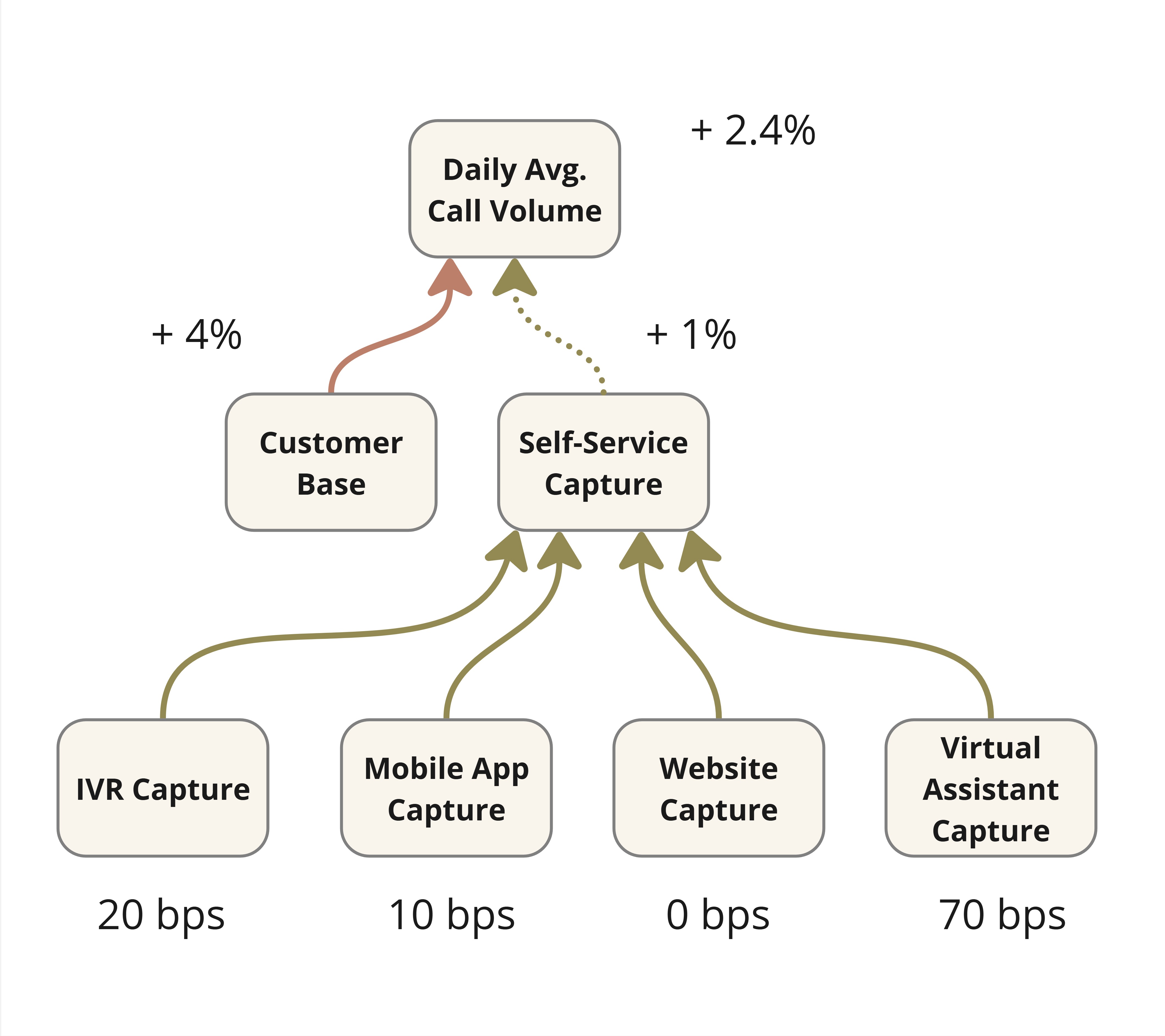

For the sake of completeness, Figure 7 shows what the results of a downstream impact retrospective might look like for the example of the customer support chatbot.

It shows that call volumes only rose by 2.4% quarter-on-quarter despite a 4% growth in the customer base. The model assumes that if nothing else changes, the change in call volume should match the change in the customer base. We see a difference of 1.6 percentage points or 160 basis points. How do we explain this? Your data analysts might inform you that 60 bps is explained by seasonality. We credit the rest (100 bps) to self-service channels and ask them to claim their contributions. After a round of contribution analysis, you might arrive at the numbers at the bottom. You could use some heuristics and simple data analysis to arrive at this. I call it Simple Impact Attribution to contrast it with more rigorous methods (e.g., controlled experiments) that a data scientist might prefer but which might not always be feasible.

Figure 7: Example of Impact Attribution
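The arithmetic behind this example is simple enough to sketch. The first part below reproduces the numbers from the text (4% customer growth, 2.4% call volume growth, 60 bps of seasonality). The second part, which scales down channel claims when they exceed what is available, is one possible heuristic and an assumption on my part. The claim figures are made up and are not the numbers in Figure 7.

```python
def gap_bps(expected_growth_pct, observed_growth_pct):
    """Shortfall of observed growth against expected growth, in basis points."""
    return round((expected_growth_pct - observed_growth_pct) * 100)


def reconcile_claims(available_bps, claims_bps):
    """Scale claims down proportionally if they add up to more than is available."""
    total = sum(claims_bps.values())
    if total <= available_bps:
        return dict(claims_bps)
    return {name: round(bps * available_bps / total) for name, bps in claims_bps.items()}


# Numbers from the text.
total_gap = gap_bps(expected_growth_pct=4.0, observed_growth_pct=2.4)
seasonality = 60
self_service = total_gap - seasonality
print(total_gap, self_service)
# 160 100

# Hypothetical claims by two self-service channels, for illustration only.
claims = {"chatbot": 90, "help_center": 60}
print(reconcile_claims(self_service, claims))
# {'chatbot': 60, 'help_center': 40}
```

Proportional scaling is crude, but it enforces the one rule that narrative-driven value claims tend to break: the attributed contributions cannot add up to more than the observed improvement.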

Action #4: Offer your CFO/COO an alternative to ROI

These days, no one knows the ROI (return on investment) of an initiative. Projections made to win approval might not be in strict ROI terms. They might just say that by executing initiative X, some important metric would improve by 5%. It is not possible to determine ROI with just this information. But with the results of impact validation in place as above, you might be able to calculate the next best thing, the Return on Projection (ROP). If the said metric improved by 4% as against the projected 5%, the ROP, also called the benefits realization ratio, is 80%. Knowing this is way better than knowing nothing. It’s way better than believing that the initiative must have done well just because it was executed (delivered) correctly.
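The ROP calculation is a single ratio. A minimal sketch follows, using the 4% versus 5% example above and the numeric rows of the proximate impact table from Action #3. The function name and the row labels are mine, and how to aggregate ROP across a portfolio is left to the book.

```python
def return_on_projection(projected, actual):
    """Benefits realization ratio: actual improvement divided by projected improvement."""
    if projected == 0:
        raise ValueError("projected improvement must be non-zero")
    return actual / projected


print(f"{return_on_projection(5, 4):.0%}")
# 80%

# (projected, actual) pairs taken from the proximate impact table.
rows = {
    "Chatbot: satisfactory sessions per peak hour": (2350, 1654),
    "Regu Nerd: time to initial recommendation (%)": (-30, -12),
    "Send times: email open rate (%)": (10, 4),
    "Send times: click through (%)": (10, 1),
}
for label, (projected, actual) in rows.items():
    print(f"{label}: {return_on_projection(projected, actual):.0%}")
# Chatbot: satisfactory sessions per peak hour: 70%
# Regu Nerd: time to initial recommendation (%): 40%
# Send times: email open rate (%): 40%
# Send times: click through (%): 10%
```

Because the ratio divides like by like, it works for reductions as well as increases. A negative ROP would mean the metric moved in the opposite direction to the projection.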

ROP is a measure of projection vs. performance. Encourage your COO/CFO to make use of past ROP to make better investment decisions in the next round of funding. Asking for a thorough justification before funding is good, but justifications are based on assumptions. A projection is invariably embedded in the justification. If funders decide based only on projections, it incentivizes people to make unrealistic projections. Business leaders may be tempted to outdo each other in making unrealistic projections to win investment (or resources like team capacity). After all, there is no way to verify later. That’s unless you have an impact intelligence framework in place. The book has more detail on how to aggregate and use this metric at a portfolio level. Note that we are not aiming for perfect projections at all. We understand product development is not deterministic. Rather, the idea is to manage demand more effectively by discouraging unrealistic or unsound projections. Discourage spray and pray.

Action #5: Equip Your Teams

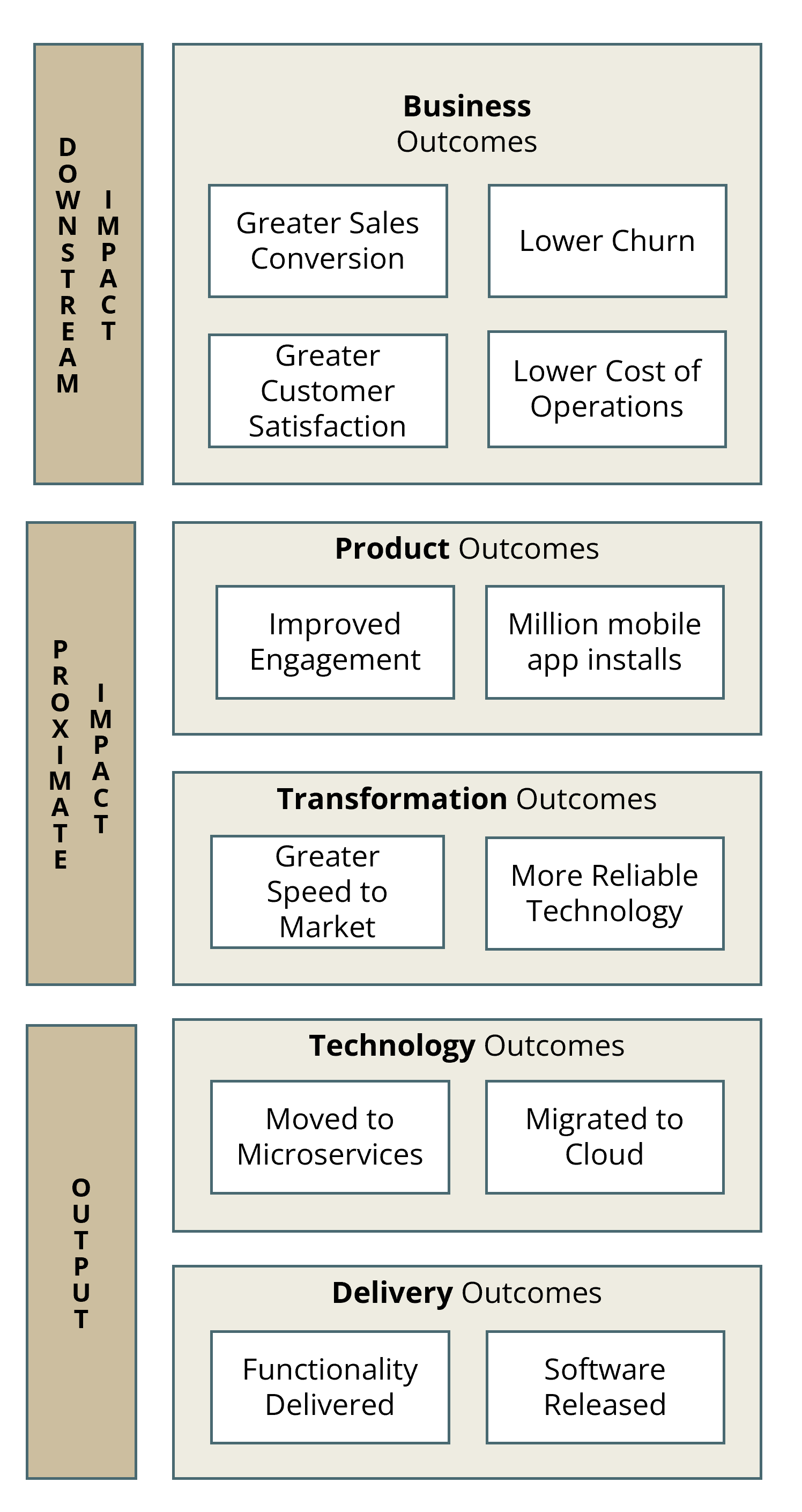

It can feel lonely if you are the only senior exec advocating for greater impact intelligence. But you don’t have to run a solo campaign. Help your teams understand the big picture and rally them around to your cause. Help them appreciate that product development does not automatically imply business impact. Even feature adoption does not. Start by helping them understand the meaning of business impact in different contexts. I have found it useful to explain this with an illustration of a hierarchy of outcomes as in Figure 8 The ones at the top are closest to business impact. The lower-level outcomes might support or enable the higher-level outcomes, but we should not take that for granted. Impact intelligence is about tracking that the supposed linkages work as expected. When your teams internalize this hierarchy, they’ll be able to help you improve demand management even more. They’ll begin to appreciate your nudges to reduce measurement debt. They’ll start asking their business colleagues and leaders about the business impact of functionality that was delivered.

Figure 8: A hierarchy of outcomes

Objections

The action suggested first, improving demand management, is key to the other four suggested actions. As noted earlier, it might encounter resistance from the people at its receiving end. Here's how to address five common objections to answering the idea questionnaire.

Objection #1: We can’t slow down

Detractors commonly push back against the idea questionnaire by saying, “We don’t have the time to answer these questions. Let’s ship it already.” That’s a mad trade-off of accuracy for speed. Accuracy, as in preparing well to achieve the desired impact. Neglecting it for speed is exactly what Figure 1 illustrates as the spray-and-pray dysfunction, a scattershot approach that is ultimately unsustainable. Spray-and-Pray implies a lack of precision and a reliance on luck rather than skill or strategy. Anything that requires skill and strategy must be learnt for accuracy first and for speed later. When accuracy is lacking, it helps the cause of business impact if you slow down a bit to gain accuracy. Think of it like playing chess.

Objection #2: It’s Not Agile

Sometimes, the idea originators (or their deputies) look at all the questions in the Idea Questionnaire and go, “It’s too much upfront analysis! It’s not agile.” Well, we are not getting deep into the solution. We are just documenting the hypothesis well. Agile doesn’t mean you jump out of the airplane and figure out how and where to land while you are mid-air. It is perfectly okay to plan and then iterate.

Besides, there usually are lots of ideas competing for limited product development bandwidth which, as noted earlier, is an expensive business resource. The size of your product backlog is an indicator of the volume of demand. Therefore, it is important to shortlist carefully.

The Agile Manifesto bats for working software over comprehensive documentation but that is not about documenting the rationale for developing said software. Working software doesn't always result in business impact, unfortunately. Neither do we run afoul of the principle of responding to change over following a plan. The Idea Questionnaire is not a plan.

Objection #3: Innovation Isn’t Predictable

Idea champions might protest that they can't be sure of the benefits early on. Then let’s stop pretending otherwise at the time of prioritization and scheduling. Let’s not make unrealistic projections just to get in front of the line. If they believe in their projections, let’s document these beliefs via the questionnaire and revisit them post delivery. If we want to go ahead and build functionality even when we have no credible information as to their benefit, let’s record that too. Those who sign the cheques ought to know how much of their funding is for shots in the dark, or even in a fog.

It's not about eliminating failure either. Failure is a part of innovation. My point is that the Classic Enterprise often does not even realize that an initiative has failed to deliver adequate business impact. If they did, they would decommission what was built and thereby avoid tech bloat (run costs) on that account.

Objection #4: Our PMO/VMO already takes care of this

No, they don't. They might have an idea justification template, but they don't have the means or the mandate to verify impact after delivery. Besides, their template might lack pointed questions, or they might be resigned to accepting vague answers. Sometimes, they dubiously report benefits realized in terms of work completion or money spent. As in, if we have delivered the functionality or spent the money, we must have realized the expected business impact!

On the other hand, if they truly have an equivalent questionnaire in place, and it is filled out properly before work arrives at your doorstep, use it by all means to carry out the other suggested actions. No need to duplicate.

Objection #5: This isn't Collaborative

Change is hard. As a reformist product leader, you are trying to do what you can to make a real difference, but you might be accused of not being collaborative. Those used to getting their whims prioritized (and scheduled) might complain that you are being an unauthorized gatekeeper. This is why you should seek the blessings of your COO/CFO prior to embarking on this journey of reform.

One more thing. Although I introduced the term in this article for the sake of clarity, you should perhaps not use the phrase Improved Demand Management when you socialize or introduce it to business leaders. Consider calling it Verifiable Ideas or Ideas with Full Disclosure.

Act Now

You don't have to feel helpless about leading a feature factory. It is in your (and the company’s) interest that you take the lead and act. Improve demand management, pay down measurement debt, introduce impact validation and share reports of projection vs. performance. Equip your teams to work toward business impact. Once you start, you should be able to alleviate backlog pressure. As these changes take hold, you and your stakeholders should begin to notice a better and stronger connection between features shipped and dollars earned.

The actions suggested aren't easy. They might even seem daunting enough that you'd prefer to deal with the backlog pressure than attempt being a reformist product leader. But then, you might never be able to speak to true business impact. And the C-Suite Core will always view your role as executional: focussed on product delivery. No shame in that, unless you believe you can do better.